April 21, 2016

While “Facebook and the Potential for Social Engineering” sounds like the worst Harry Potter book ever, it is actually a fairly frightening possibility.

The potential abuse of something as powerful as a social media network is a reality we have explored before. Facebook in particular has gone as far as actually experimenting with the power of its own influence.

Back in 2012, Facebook researchers tweaked the feed algorithm of 689,003 users to show a disproportionate number of positive or negative statuses for one week, and published a report showing just how powerful that influence was.

This has been called the “emotional contagion” effect by people who, I assume, make rats run in mazes.

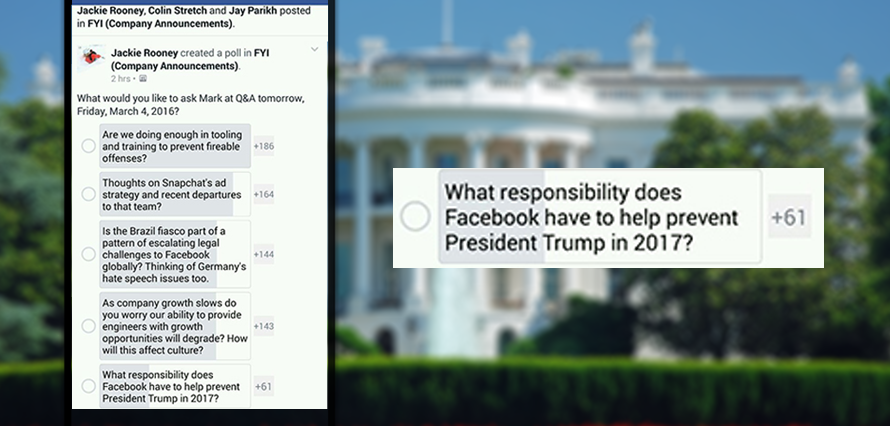

This power has not gone unnoticed by staff at Facebook HQ, who last month used a company poll to ask mighty king Zuckerberg whether the company should try “to help prevent President Trump in 2017.”

(Put tin foil hat on now)

Image credit: Gizmodo

Unsurprisingly, with over $1bn spent on online political advertising in 2016, social really could sway the outcome in a big way.

While few doubt that it is possible to influence a population into voting one way or another, a bigger question is now being raised: should it be done?

Interestingly, Facebook could technically do it with no legal ramifications at all, but there is seemingly little financial motivation to do so.

As Facebook slowly engulfs the internet, bringing in more and more content from external sources, it is struggling with “context collapse”, or the decline in people sharing the original, personal content that helps shape each online persona and create a viable audience for advertisers.

“What’s that got to do with social engineering?” I hear you mutter.

Well, smarty pants, if you can influence your audience so strongly that you can effectively control how they feel, then collecting data to define how they feel (reactions etc.) becomes pretty circular if not downright redundant.

At what point does data collection lose out to content curation?

I guess we will find out in November.